I recently spoke on a Health Leads webinar about AI and health equity. More than 350 people attended, representing healthcare, government, academia, philanthropy, and nonprofit organizations. These folks are working every day on some of the hardest problems in society: equitable access to healthcare, housing, food, benefits, and social services. What surprised me most during the conversation wasn’t the questions about AI. It was the level of fear, and the sense that AI was something happening to the social sector, rather than something we could help shape.

What I saw in the chatroom

During the webinar, I kept an eye on the chat, and it was one of the most active chatrooms I’ve ever seen on a public webinar.

What shocked me most wasn’t skepticism about AI. Skepticism and critique of AI systems are healthy. What surprised me was how much fear and anxiety there was.

People were (rightfully) worried about environmental impact, bias, corporate power, reliability, job loss, and surveillance. All valid concerns. All important conversations to have. But the tone of many comments suggested something deeper: a sense that AI was something happening to us, rather than something we could shape.

I live and work in a tech bubble in San Francisco, and even some folks on my own team have shared similar fears with me. But I didn’t fully appreciate the severity and breadth of those fears until I watched that chat scroll by for an hour.

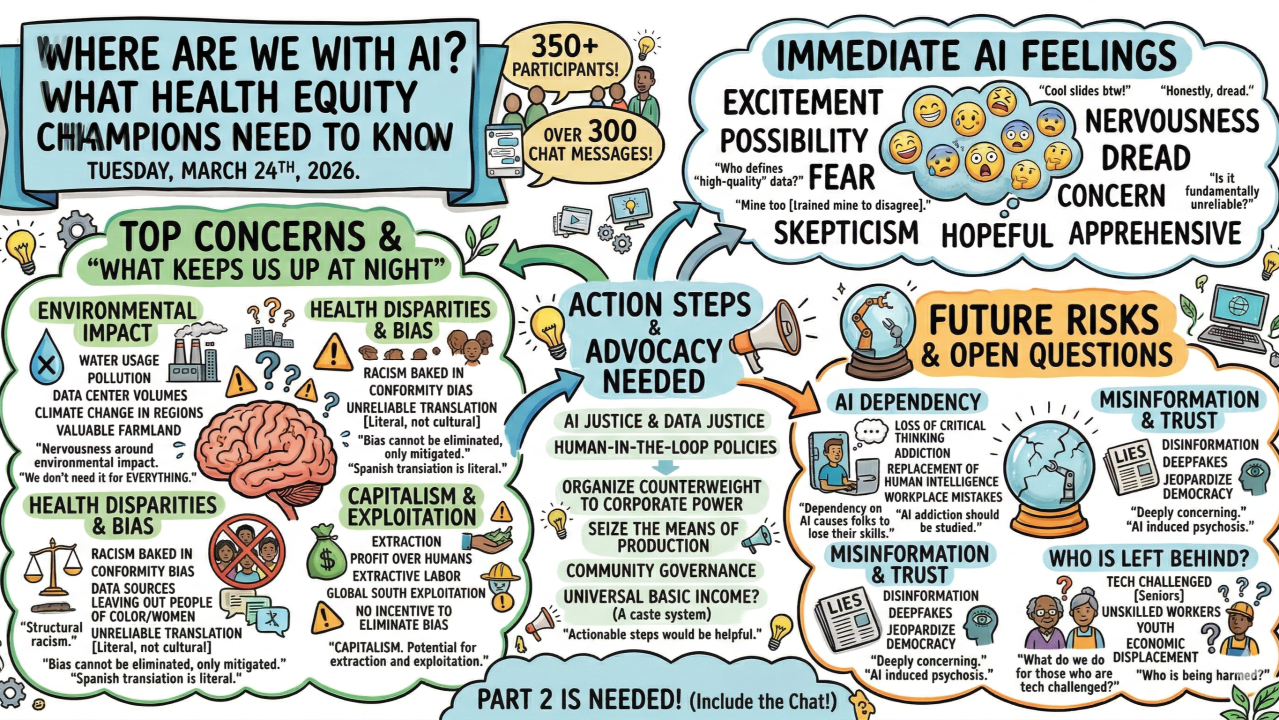

After the webinar, I prompted Gemini to create a graphic of the comments section. It turned out to be pretty interesting, and you can check it out above. Here are a few of the comments that stood out to me.

The fear of AI is not new

One person wrote, “I really wish that we could just stop the AI train. I think it’s incredibly destructive and could literally kill us all.”

It’s a familiar comment. Every major technological shift has produced similar reactions. When railroads were first introduced in the 1800s, doctors warned that the vibration of trains would damage the human body and cause serious medical problems (I actually referenced an 1862 Lancet article about this during the talk). When the internet emerged, many people believed it would destroy social relationships. When smartphones appeared, people worried about addiction and surveillance.

But in each case, the technology became core infrastructure. And society reorganized itself around it.

The same will probably be true for AI. The question has never really been whether a technology will exist. The question is who builds it, who controls it, and who benefits from it.

Fix the system, but people need help today

Another comment during the webinar said, “Instead of needing to rely on AI to navigate the system, we could just make the system easier to navigate and we’re choosing not to.”

I actually agree with that sentiment. Our current systems didn’t become complex by accident; they are the result of policy choices made over time. Affordability is a policy choice. Health equity is a policy choice. Administrative burden is a policy choice. We should absolutely work to make these systems easier to navigate and more equitable.

But that kind of change takes decades. It requires policy reform, funding changes, system redesign, and political will. In the meantime, millions of families are navigating fragmented systems right now. They are filling out the same forms over and over, waiting on hold, riding buses across town for appointments, and trying to piece together help from dozens of different organizations that don’t talk to each other.

Any tool that reduces paperwork, administrative burden, and time spent navigating bureaucracy can make a real difference in people’s lives today. That’s always been my stance, even back when I started One Degree in 2012.

The reliability debate

Another participant raised concerns about reliability, citing studies showing high error rates for some AI systems, and asked whether it was responsible to expose users to unreliable tools.

This is an important concern, but it also reflects a misunderstanding of how these tools should be used. I believe that AI should not be making final eligibility decisions or replacing professional judgment (at least not until it reaches a level of reliability that even humans rarely achieve). But AI can be very useful for moving and organizing data, summarizing documents, translating information, pre-filling forms, and reducing administrative work.

Social service and healthcare systems still have big barriers in the form of paperwork, intake forms, phone calls, follow-ups, and administrative friction. If AI can reduce that friction, it can free up staff time, reduce errors from manual data entry, and make systems easier for people to navigate.

AI is already here, whether we participate or not

Right now, while many of us are debating whether AI is good or bad, the technology is already being adopted at massive scale. People are using AI for legal advice, medical questions, benefits questions, housing questions, and emotional support, whether we think that’s a good idea or not. ChatGPT is already the largest single provider of mental health support in America!

The reality is that AI is not waiting for the social sector to be ready.

My organization, One Degree (1degree.org), builds technology that helps people access food, housing, healthcare, and public benefits. We started using AI not because it was trendy, but because we had very real problems, like trying to figure out how to interoperate between multiple data silos of community resource information with different taxonomies.

What we’ve learned is that AI is just one tool in our toolbox.

Most of our platform is still built using traditional deterministic software: databases, rules engines, workflows, and APIs. We use generative AI only where it actually solves hard problems. And a big part of our work now is simply learning: learning how to build with generative AI, how to push its limits, where it breaks, where it works well, and where it shouldn’t be used at all.

It’s important to understand these tools now, not because we want to use AI everywhere, but because we need to know how to harness it and apply it in ways that actually benefit people and move us toward more equitable systems.

AI is an infrastructure moment

If we are serious about equity, then we should care about who is building these tools, who controls the infrastructure, whose values are embedded in the systems, and who benefits from them.

Because if the social sector, public sector, and healthcare sector do not actively participate in building and shaping these tools, they will still be built — just by someone else, with different incentives.

This moment reminds me again of the railroads. The biggest impacts of railroads were not medical, as early critics feared. They were economic and social. Railroads connected some cities and bypassed others. Some towns grew rapidly, while others declined. Railroads raised huge questions about infrastructure investment, corporate power, and equity.

Technology, like rail systems, becomes infrastructure. And AI is in a similar kind of infrastructure moment.

What organizations should do right now

So what does that actually mean for organizations, clinicians, community health workers, and nonprofit leaders right now?

Most organizations do not need to build AI models. But every organization should be thinking carefully about how these tools will change their work, their workflows, and the people they serve. In many ways, AI tools are becoming like spreadsheets or shared documents: tools that not every organization builds, but that every organization eventually uses.

The most important place to start is not with the technology, but with the problems. Instead of asking, “How do we use AI?” a better question is, “Where are we struggling to make an impact? Where are people dropping off? Where are we wasting time? Where is paperwork slowing things down?” AI is pretty useful right now for very specific operational problems.

It is also important to be careful about what decisions we automate. Using AI to summarize notes or help fill out forms is very different from using AI to determine eligibility or generate risk scores. High-stakes decisions should still involve human judgment and accountability.

Another lesson many organizations are learning is that AI problems are often actually data problems. If your data is incomplete, inconsistent, or biased, AI will simply amplify those problems. Investing in better data systems, data governance, and data quality may ultimately be more important than investing in AI tools themselves.

Organizations also need to invest in their people’s understanding of AI. Staff need training not just on how to use AI tools, but on when not to trust them, how to verify outputs, how to protect sensitive information, and how to think critically about where these tools should and should not be used. Many organizations will need internal AI policies and principles, just as they developed policies for email, data security, and cloud software over the past two decades.

And perhaps most importantly, the communities we serve need to be involved in the design and testing of AI tools. Otherwise, we risk building systems that work well for institutions but not for the people who actually need them.

A message for funders: get off the sidelines

For funders, I have one message in this moment: get off the sidelines.

Technology systems always reflect the people who pay to build them. This is a major technology shift that is going to change healthcare, social services, and how people access benefits and support. If funders do not engage, the future of AI in healthcare and social services will be built primarily by large vendors and institutions, optimized for compliance, billing, and efficiency (think EHRs, HMIS, and closed-loop referral systems).

We don’t just need small grants for experimental AI tools around the margins. We need investment in community-owned digital public infrastructure, data systems, governance, and shared platforms so equity is built into these systems from the beginning, not added later as an afterthought.

Don’t let anxiety turn into inaction

And for everyone else reading this (nonprofit leaders, healthcare providers, social workers, policymakers), here’s my advice for right now: don’t let anxiety turn into inaction. Start learning. Start experimenting. Start asking questions. You don’t need to become an AI engineer (and TBH, you never will), but you do need to understand how these tools work and where they fit into your work.

Every major infrastructure shift reshapes society (railroads, electricity, highways, the internet). AI is likely another one of those shifts. The biggest questions are not technical. They are social, economic, and political. Who benefits? Who gets left out? Who controls the infrastructure? What systems are we reinforcing, and what systems are we changing?

AI is here whether we like it or not. The question is not whether we use it. The question is whether we help shape how it is used.

If we care about equity, we cannot only critique the future. We have to help build it.